Most customer support teams have already deployed AI in some form. The challenge now is no longer adoption. It’s improvement.

Static AI experiences quickly fall behind customer expectations. The teams seeing the strongest results are the ones building systems that continuously learn, adapt, and improve over time.

As AI becomes a larger part of the customer experience, support organizations need reliable ways to safely update workflows, test changes, and measure outcomes without creating unnecessary risk or operational overhead.

That’s why the most effective AI strategies no longer center on simply deploying automation. They center on continuous improvement.

At Forethought, we believe AI should improve with every interaction. Every workflow update, experiment, and customer interaction becomes part of a larger learning loop that helps AI agents become smarter, faster, and more effective over time.

But self-improving AI does not happen automatically. Teams need the operational systems to safely test changes, measure outcomes, and continuously optimize how AI agents behave across channels.

That starts with Autoflow Versioning.

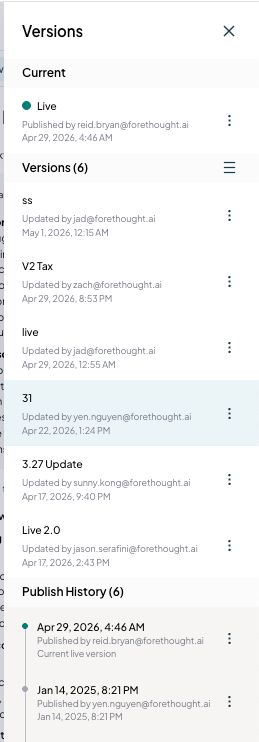

Versioning gives teams a safer way to manage and deploy workflow changes. Instead of editing live workflows directly, teams can create named versions, review publish history, preview updates before deployment, and restore earlier versions when needed.

This helps teams safely iterate on AI experiences without disrupting live customer interactions and creates the operational safety layer required for continuous improvement.
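To make the idea concrete, here is a minimal sketch of how named versions, publish history, preview, and restore can fit together. It is an illustration only, not Forethought's implementation: `WorkflowVersion`, `WorkflowHistory`, and every method name are hypothetical, and a workflow is reduced to a plain dictionary.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class WorkflowVersion:
    """A saved snapshot of a workflow (hypothetical shape)."""
    name: str
    definition: Dict[str, str]


@dataclass
class WorkflowHistory:
    """Keeps every saved version plus an ordered log of what was published."""
    versions: List[WorkflowVersion] = field(default_factory=list)
    publish_log: List[str] = field(default_factory=list)  # oldest first

    def _find(self, name: str) -> WorkflowVersion:
        for version in self.versions:
            if version.name == name:
                return version
        raise KeyError(f"no version named {name!r}")

    def save(self, name: str, definition: Dict[str, str]) -> None:
        """Store a new named version without touching what is live."""
        if any(v.name == name for v in self.versions):
            raise ValueError(f"version {name!r} already exists")
        self.versions.append(WorkflowVersion(name, dict(definition)))

    def preview(self, name: str) -> WorkflowVersion:
        """Return a saved version for review; nothing is published."""
        return self._find(name)

    def publish(self, name: str) -> None:
        """Make a saved version live and record it in the history."""
        self._find(name)  # raises if the version does not exist
        self.publish_log.append(name)

    def restore(self, name: str) -> None:
        """Roll back by re-publishing an earlier version."""
        self.publish(name)

    @property
    def live(self) -> Optional[WorkflowVersion]:
        """The most recently published version, if any."""
        return self._find(self.publish_log[-1]) if self.publish_log else None


history = WorkflowHistory()
history.save("v1-baseline", {"greeting": "Hi, how can I help?"})
history.save("v2-shorter-greeting", {"greeting": "How can I help?"})
history.publish("v1-baseline")
history.publish("v2-shorter-greeting")
history.restore("v1-baseline")  # roll back without editing anything live
```

The key design choice in this sketch is that saving and publishing are separate steps, so a draft can be reviewed before it ever reaches a customer, and a rollback is just another entry in the publish history.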

But safely managing updates is only part of the equation. Teams also need to understand which experiences actually perform best for customers.

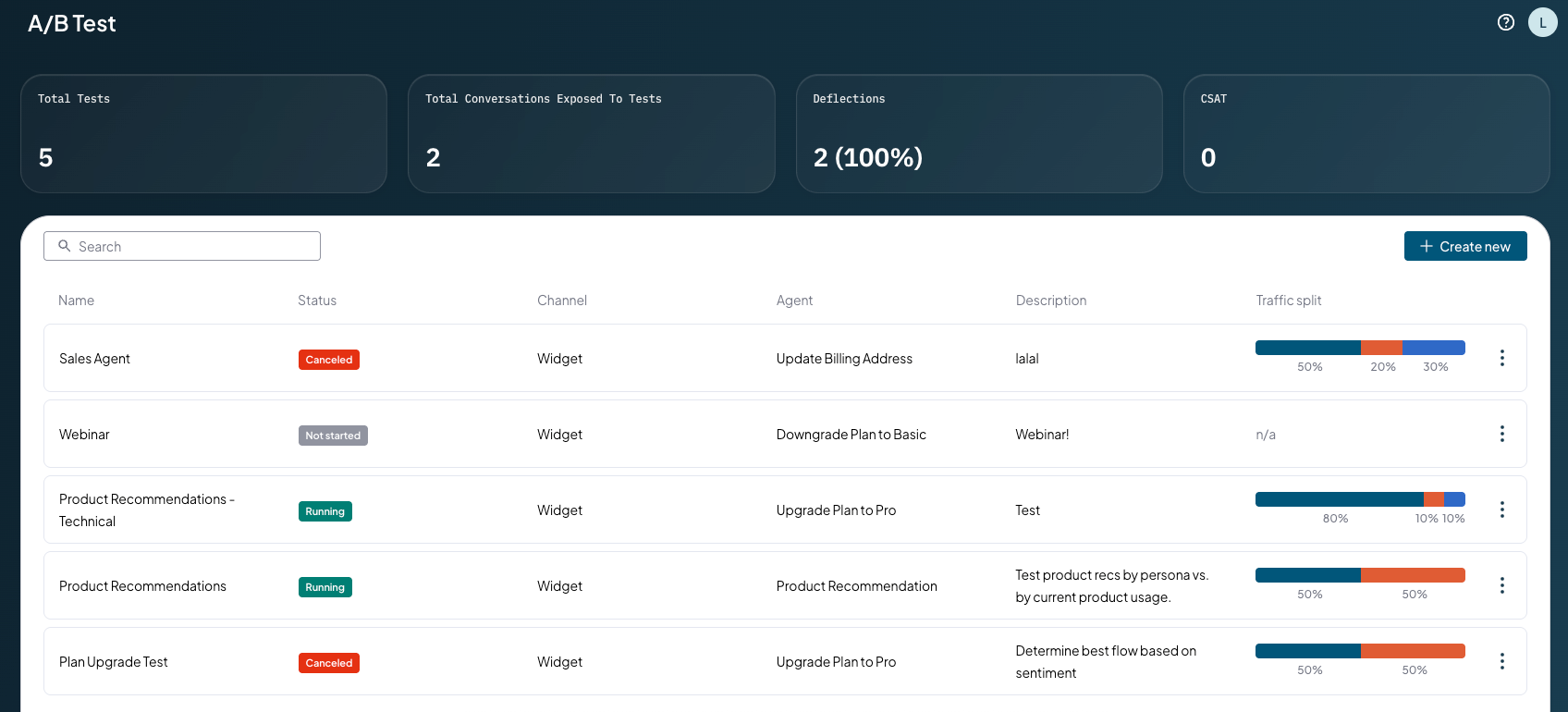

That’s where A/B Testing becomes critical.

A/B Testing allows teams to compare Autoflow experiences using real customer traffic across Widget, Voice, Slack, and API channels. Teams can split traffic between a live control and multiple variants, then measure performance using metrics like deflection, CSAT, sentiment, relevance, and engagement.
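One common way to split traffic between a control and several variants is deterministic hashing, so the same conversation always lands in the same bucket. The sketch below assumes that approach for illustration; it does not describe how Forethought assigns traffic, and `assign_variant`, the experiment name, and the weights are all hypothetical.

```python
import hashlib
from typing import Dict


def assign_variant(conversation_id: str, experiment: str,
                   weights: Dict[str, float]) -> str:
    """Map a conversation to a variant in proportion to the given weights.

    Hashing the experiment name together with the conversation ID keeps the
    assignment stable across repeat visits and independent across experiments.
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")

    digest = hashlib.sha256(f"{experiment}:{conversation_id}".encode("utf-8")).digest()
    # Turn the first 8 bytes of the hash into a point in [0, total).
    point = int.from_bytes(digest[:8], "big") / 2**64 * total

    cumulative = 0.0
    for name, weight in weights.items():
        cumulative += weight
        if point < cumulative:
            return name
    return name  # guard against floating-point rounding at the upper edge


# Hypothetical split: half of traffic stays on the live control.
split = {"control": 0.5, "variant_a": 0.25, "variant_b": 0.25}
bucket = assign_variant("conversation-123", "greeting-test", split)
```

Because the assignment depends only on the IDs and the weights, no per-customer state needs to be stored to keep the experience consistent.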

Instead of relying on assumptions, support teams can make statistically informed deployment decisions based on real customer outcomes.
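As one example of what "statistically informed" can mean, a standard two-proportion z-test checks whether a difference in deflection rate between the control and a variant is likely to be more than noise. This is a generic textbook method shown as a sketch, not a description of the statistics Forethought runs, and the counts below are made up for illustration.

```python
import math
from typing import Tuple


def two_proportion_z_test(success_a: int, total_a: int,
                          success_b: int, total_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in rates, B minus A."""
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    # Pooled rate under the assumption that both groups behave the same.
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        return 0.0, 1.0  # both groups are all successes or all failures
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: deflected conversations out of total conversations.
z, p_value = two_proportion_z_test(success_a=400, total_a=1000,   # control
                                   success_b=450, total_b=1000)   # variant
variant_looks_better = z > 0 and p_value < 0.05
```

A small p-value suggests the gap is unlikely to be chance alone, but sample size, test duration, and the other metrics being tracked still deserve a look before promoting a variant.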

Together, Versioning and A/B Testing create the foundation for self-improving AI agents. Teams can safely introduce workflow changes, measure performance, and continuously refine experiences over time. Every interaction becomes a new signal that helps improve future resolutions.

The companies seeing the strongest AI results in CX are not treating AI as a one-time deployment. They are treating it as a system that evolves continuously through testing, learning, and optimization.

The future of AI in CX will not belong to the teams that simply deploy AI first. It will belong to the teams building systems that continuously learn, adapt, and improve with every interaction.

Want to see Versioning or A/B Testing in action? Talk to your Forethought team, or reach out to get started.
